Submission by Faran Zafar

Hack #6: Zed · Zed

30 Apr, 09:42

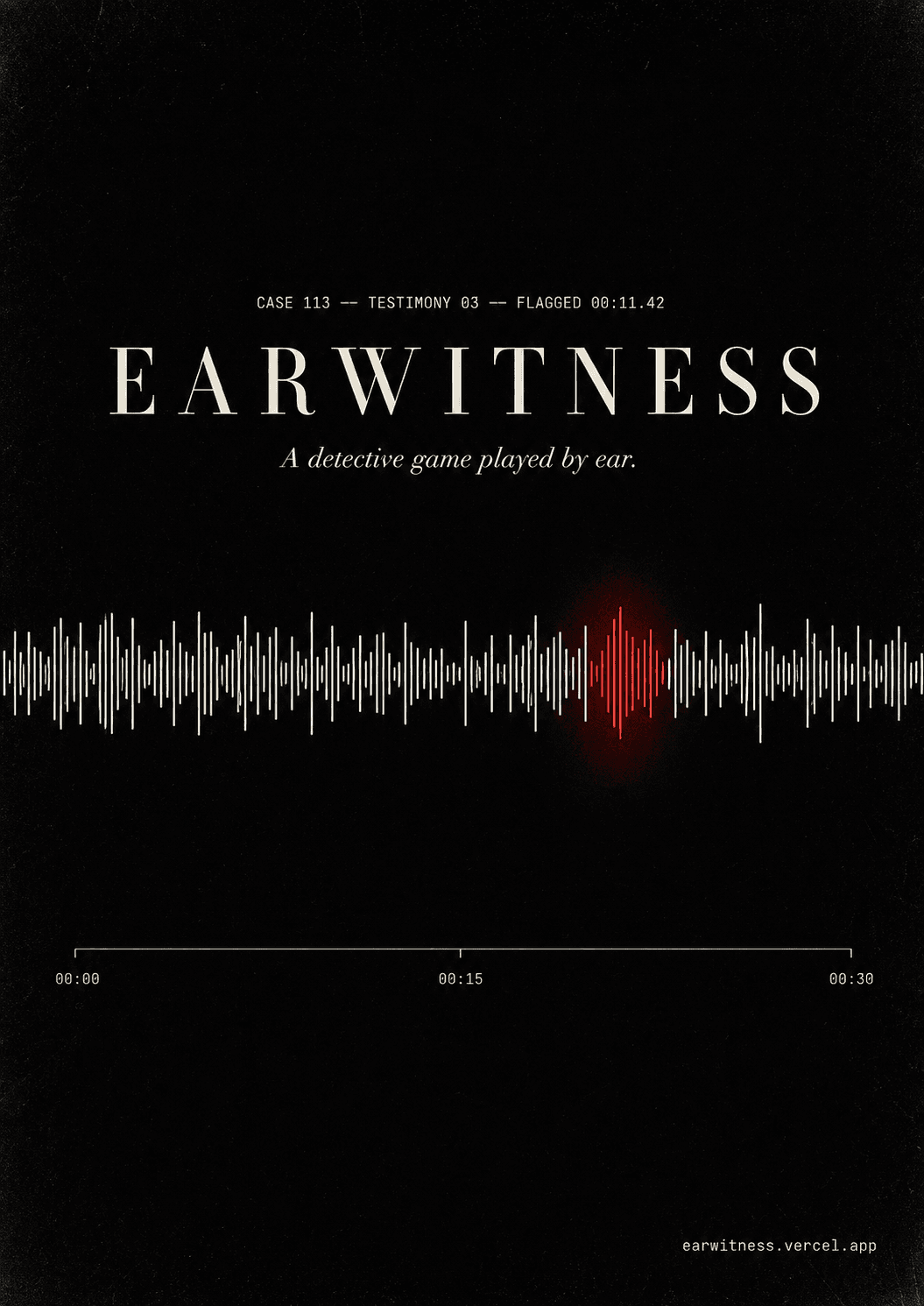

Earwitness is a detective game where the gameplay is purely auditory. There is no combat, no exploration, no inventory: just four AI-generated suspect voices and the suspicion that one of them is lying.

The premise. Each case opens with a forensic case file: victim, location, time of death, and four suspects. Players listen to each suspect’s recorded testimony, watch the transcript light up word by word as the suspect speaks, and flag the lines that sound suspicious. Once all four have been heard, the player accuses the killer and identifies which line gave them away. A correct accusation triggers a full ElevenLabs-generated confession; a wrong one ends with an innocent person executed.

The core mechanic. Lying lines are generated with lower voice stability and higher style settings in ElevenLabs Multilingual v2, producing a subtle vocal tell that human listeners can detect. The player isn’t guessing; they’re catching micro-cadence shifts the same way a detective in an interrogation room would.

The textual layer. Every claim-dense line carries an evidence tag (ALIBI, TIMESTAMP, WITNESS, MOTIVE, or ADMISSION), color-coded inline in the transcript. When a player flags a line, the notebook surfaces any cross-suspect contradictions: the other suspect’s testimony, displayed in italic serif, with a short forensic note and a one-click jump to that exact moment in the other recording. The cross-reference is earned through detective work, not handed out.

What’s there. Three full cases: a 1960s-coded estate murder (Vance), a 1.2M-follower influencer drowned at her own brand-launch party (Wipeout), and a Hinge first date ending in an elevator (Double Tap). 112 individually generated MP3 clips, 16 distinct ElevenLabs voices, 8 cross-suspect contradictions, and full per-line stability tuning on the killers’ lies.

The build. Built in Zed in 24 hours. Static HTML + React UMD + Babel, with no build pipeline. Deployed on Vercel as a single static site.
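As an illustration of the cross-suspect contradictions described under "The textual layer", here is a minimal sketch of how a flagged line might be matched to the other suspect's testimony. The data shape, field names, suspect ids, and the `crossReferencesFor` helper are all hypothetical; nothing here is taken from the Earwitness source.

```javascript
// Hypothetical contradiction table: each entry pairs two lines from
// different suspects and carries a short forensic note.
const contradictions = [
  {
    a: { suspect: "suspect-1", line: 3 },
    b: { suspect: "suspect-2", line: 5 },
    note: "Both claim to have been alone in the same room at the same time.",
  },
  {
    a: { suspect: "suspect-2", line: 1 },
    b: { suspect: "suspect-4", line: 2 },
    note: "The stated arrival times cannot both be true.",
  },
];

// Return the cross-references to surface when the player flags a line.
// Each hit points at the *other* side of the contradiction, so the UI
// can offer a jump to that moment in the other recording.
function crossReferencesFor(flag, table) {
  const hits = [];
  for (const entry of table) {
    for (const [self, other] of [[entry.a, entry.b], [entry.b, entry.a]]) {
      if (self.suspect === flag.suspect && self.line === flag.line) {
        hits.push({ jumpTo: other, note: entry.note });
      }
    }
  }
  return hits;
}
```

Flagging a line that appears in no contradiction returns an empty list, which matches the idea that the notebook only rewards flags that land on a real inconsistency.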
The audio generation pipeline is a 90-line Node script that reads the case data, calls the ElevenLabs API once per line, and writes the per-suspect manifests that the audio engine consumes at load time.

Try it: earwitness.vercel.app
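The per-line generation step might look roughly like the sketch below. It is not the author's script: the numeric settings, the `isLie` flag, the file-naming scheme, and the manifest fields are assumptions chosen only to show lies getting lower stability and higher style. The endpoint, the `xi-api-key` header, and the `eleven_multilingual_v2` model id follow the public ElevenLabs text-to-speech API.

```javascript
// Sketch only: setting values and field names are assumptions.
const MODEL_ID = "eleven_multilingual_v2";

// Assumed values: lies use lower stability and higher style than truths.
const TRUTH_SETTINGS = { stability: 0.6, similarity_boost: 0.75, style: 0.1 };
const LIE_SETTINGS = { stability: 0.3, similarity_boost: 0.75, style: 0.5 };

// Pick the voice settings for one line of testimony.
function voiceSettingsFor(line) {
  return line.isLie ? LIE_SETTINGS : TRUTH_SETTINGS;
}

// Build the HTTP request for one line. No network call is made here.
function buildRequest(line, voiceId, apiKey) {
  return {
    url: `https://api.elevenlabs.io/v1/text-to-speech/${voiceId}`,
    options: {
      method: "POST",
      headers: { "xi-api-key": apiKey, "Content-Type": "application/json" },
      body: JSON.stringify({
        text: line.text,
        model_id: MODEL_ID,
        voice_settings: voiceSettingsFor(line),
      }),
    },
  };
}

// Build a per-suspect manifest listing one clip file per line.
// The isLie flag is deliberately left out of the manifest.
function buildManifest(suspect) {
  return {
    suspect: suspect.id,
    clips: suspect.lines.map((line, i) => ({
      index: i,
      file: `${suspect.id}-${String(i).padStart(2, "0")}.mp3`,
      text: line.text,
    })),
  };
}
```

In Node 18 or later, passing `url` and `options` to the built-in `fetch` and writing the response bytes to disk would produce each MP3. Keeping request construction separate from the network call makes the tuning logic easy to check without spending API credits.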

4 participants · 0 audience