Submission by Catxi

Hack #8: Cursor · Cursor

14 May, 10:27

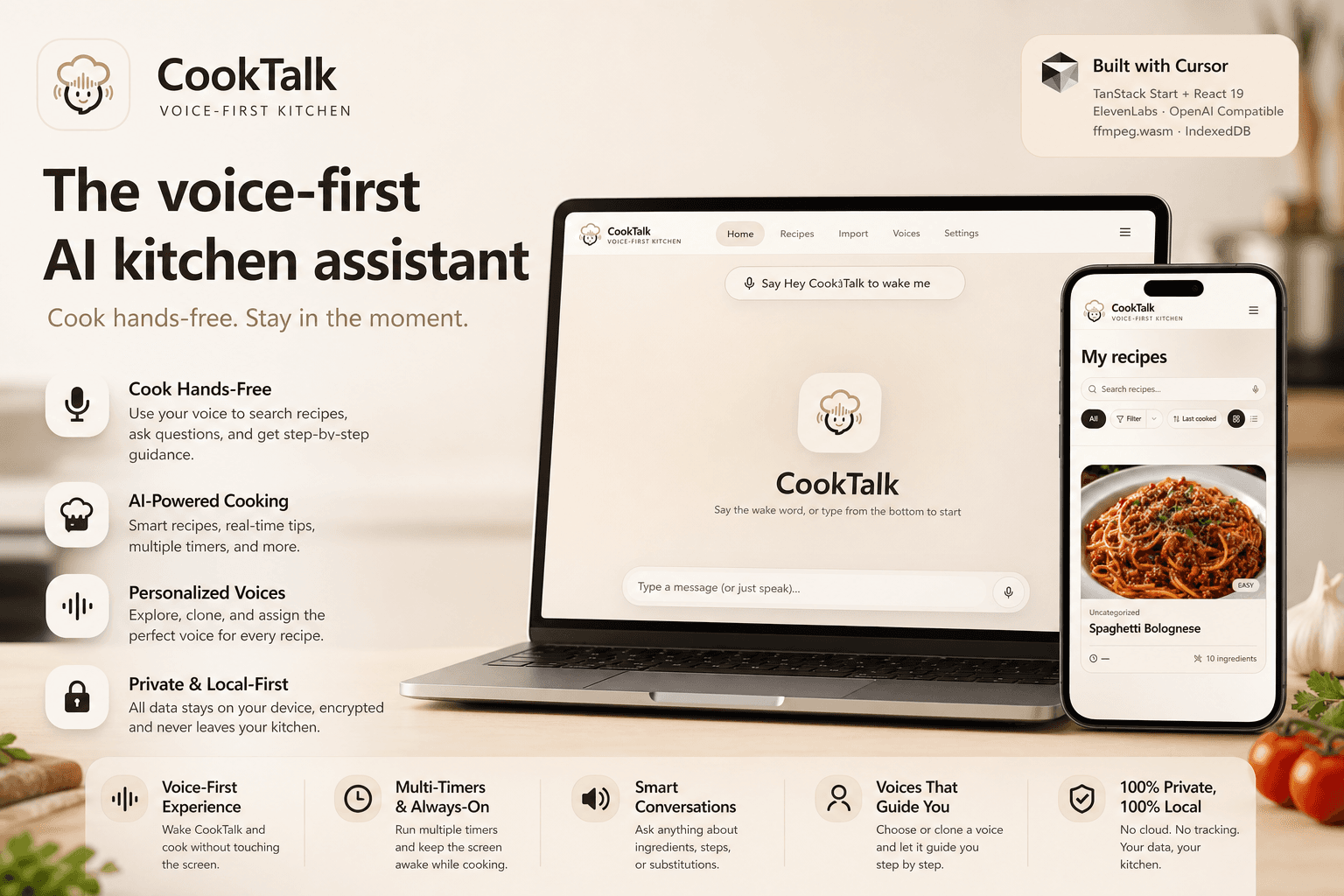

Hands covered in grease, yet you still have to tap the screen? Busy stir-frying, yet constantly pausing to check the next step? Enter CookTalk: a voice-first AI kitchen assistant. You speak; it executes.

We built the entire application in Cursor, using its AI-assisted coding to put together a full-stack architecture on TanStack Start and React 19. We also integrated the ElevenLabs API deeply. It generates speech for recipe searches and cooking conversations, and it also powers voice library browsing, voice cloning management, and binding a specific voice to a recipe for step-by-step read-aloud guidance. For every dish, you can be guided through the cooking process by a voice you truly love.

We use OpenAI-compatible interfaces to convert videos into structured recipes, to drive AI recipe conversations, and to generate cover images. Video and audio extraction runs locally in the browser with ffmpeg.wasm, and all data is encrypted with AES-GCM before being stored in IndexedDB. The whole workflow is local-first and requires no backend server.

What makes CookTalk unique is how far it takes the idea of keeping your hands free in the kitchen. With parallel timers, an always-on screen, and wake-word activation, users can go from searching for a recipe to finishing the dish without touching the screen. Voice stops being an assistive tool and becomes the primary mode of interaction, so your full attention can return to the pot on the stove.
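To make the "parallel timers" idea concrete, here is a minimal sketch of how several named cooking timers could run side by side. It is not CookTalk's actual code: the `ParallelTimers` class, its method names, and the injectable `now` clock are all hypothetical, chosen only to illustrate tracking multiple deadlines independently.

```typescript
// Hypothetical sketch: CookTalk's real timer implementation is not shown
// in the submission. Every name below is an assumption for illustration.
class ParallelTimers {
  // Maps a timer label (e.g. "pasta") to its absolute end time in milliseconds.
  private timers = new Map<string, number>();
  private now: () => number;

  // `now` is injectable so the logic can be exercised without real waiting.
  constructor(now: () => number = () => Date.now()) {
    this.now = now;
  }

  // Start (or restart) a timer that ends `durationMs` from the current time.
  start(label: string, durationMs: number): void {
    this.timers.set(label, this.now() + durationMs);
  }

  // Milliseconds left on a timer, or undefined if no such timer is running.
  remainingMs(label: string): number | undefined {
    const endsAt = this.timers.get(label);
    return endsAt === undefined ? undefined : Math.max(0, endsAt - this.now());
  }

  // Remove and return the labels of all timers that have reached their end time.
  collectFinished(): string[] {
    const current = this.now();
    const done: string[] = [];
    this.timers.forEach((endsAt, label) => {
      if (endsAt <= current) done.push(label);
    });
    done.forEach((label) => this.timers.delete(label));
    return done;
  }
}

// Usage with a fake clock: two timers run at once, and only one has finished.
let clock = 0;
const timers = new ParallelTimers(() => clock);
timers.start("pasta", 600_000); // 10 minutes
timers.start("sauce", 180_000); // 3 minutes
clock = 200_000;                // 3 min 20 s later
const finished = timers.collectFinished(); // ["sauce"]
const pastaLeft = timers.remainingMs("pasta"); // 400000
```

In a voice-first app, something like `collectFinished` could be polled on an interval and each returned label read aloud. Storing absolute end times rather than counting down keeps the timers accurate even if a polling tick is delayed.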

2 participants · 1 audience