$22

Ended

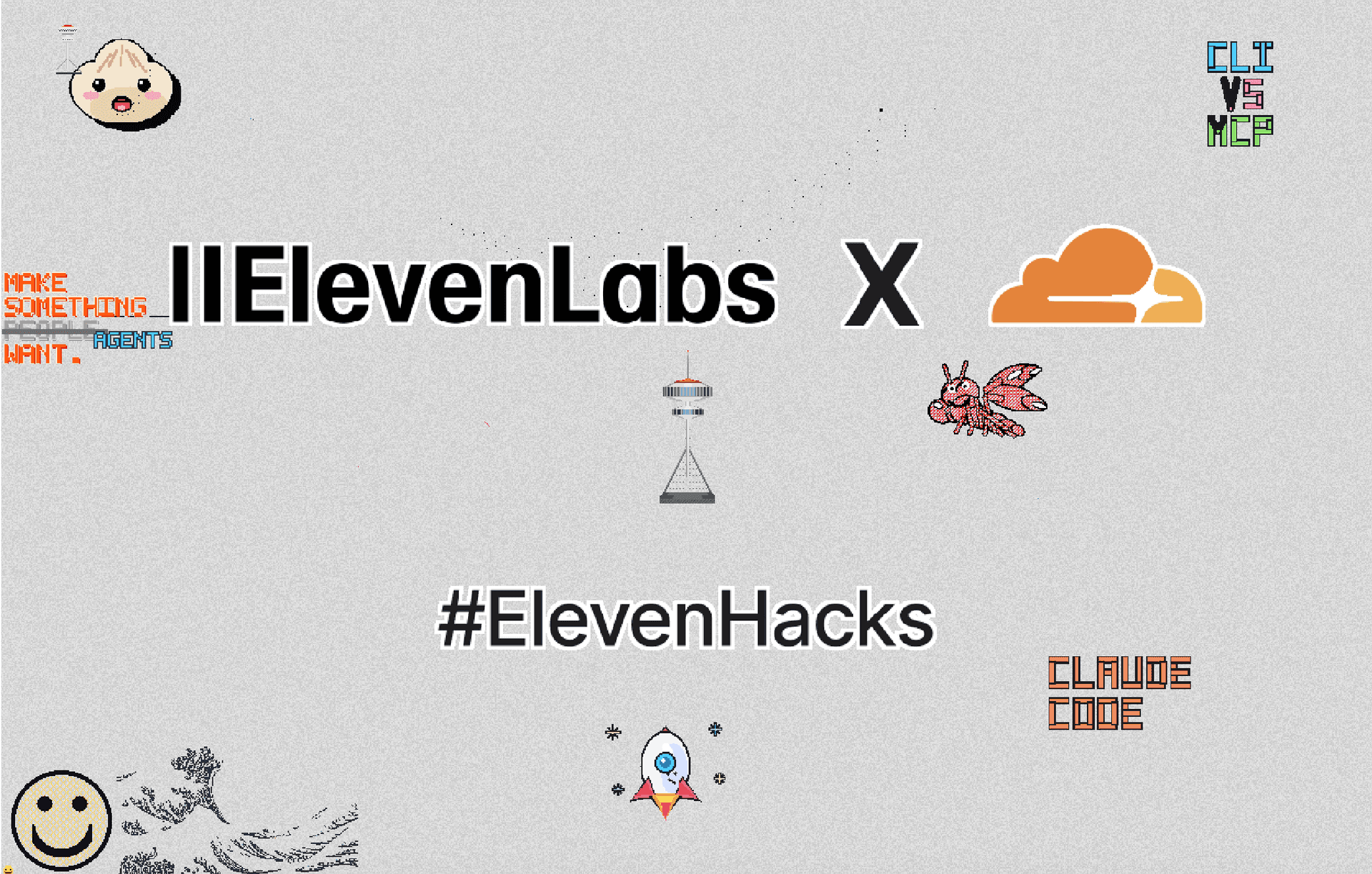

Hack #2: Cloudflare

Challenge

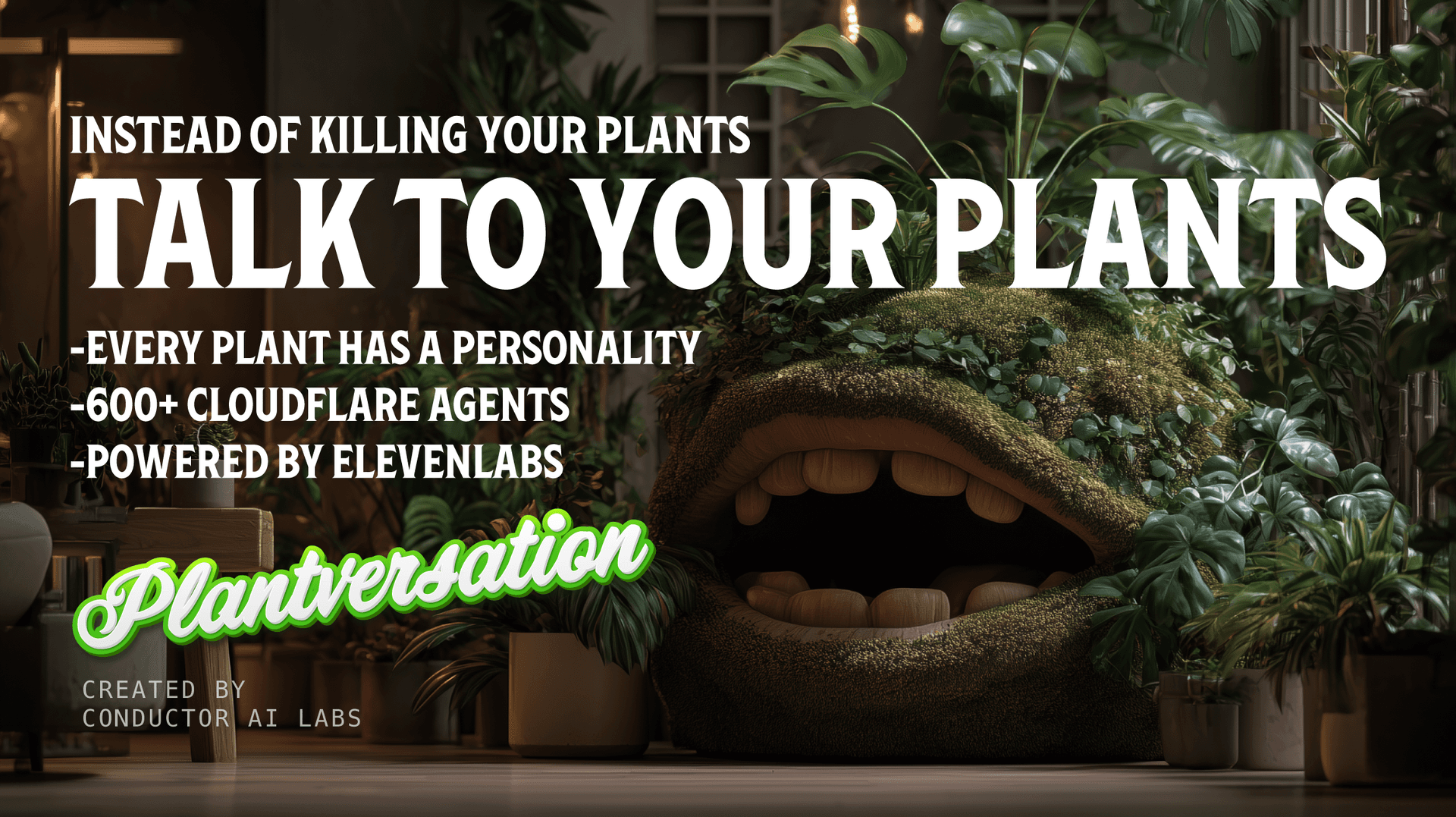

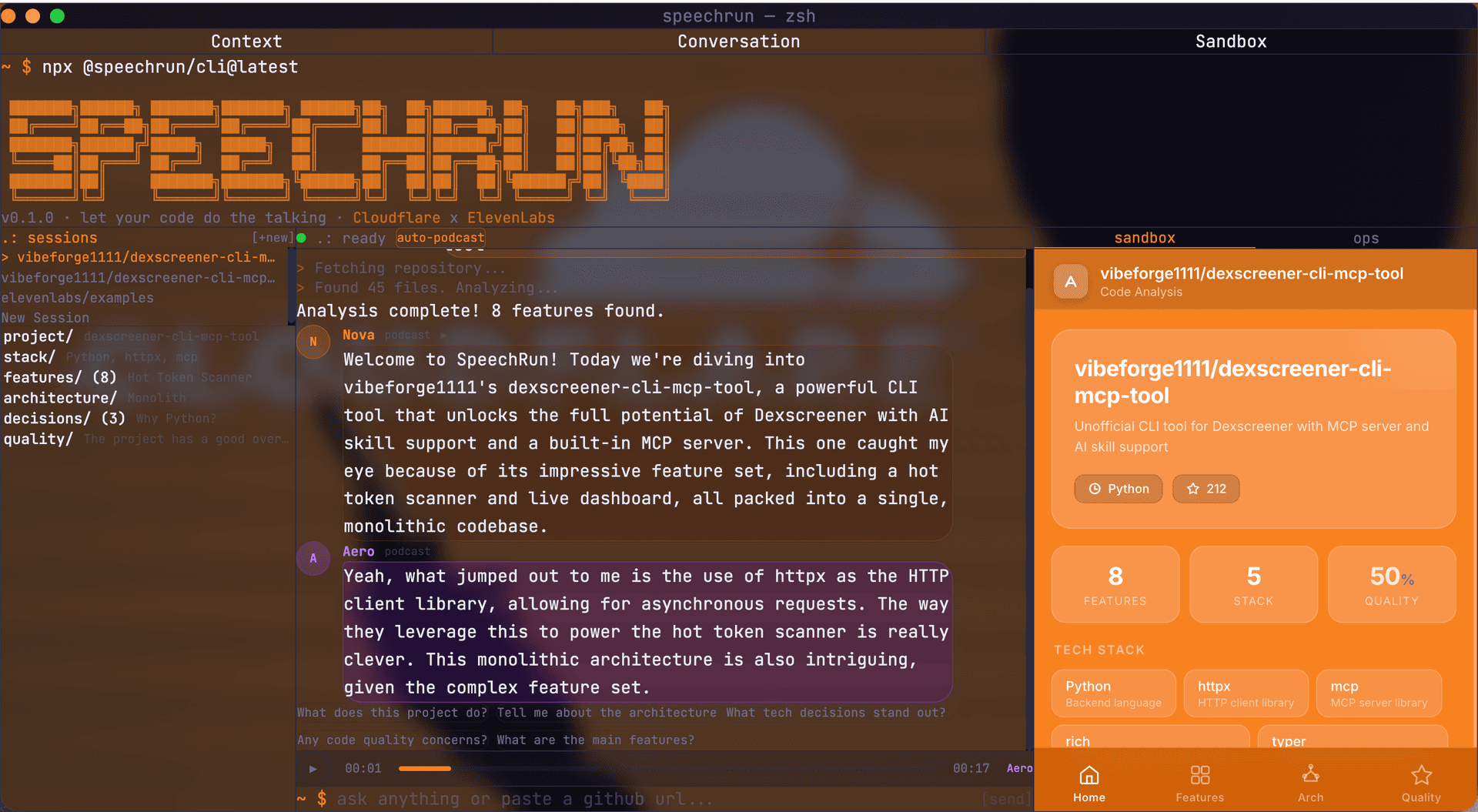

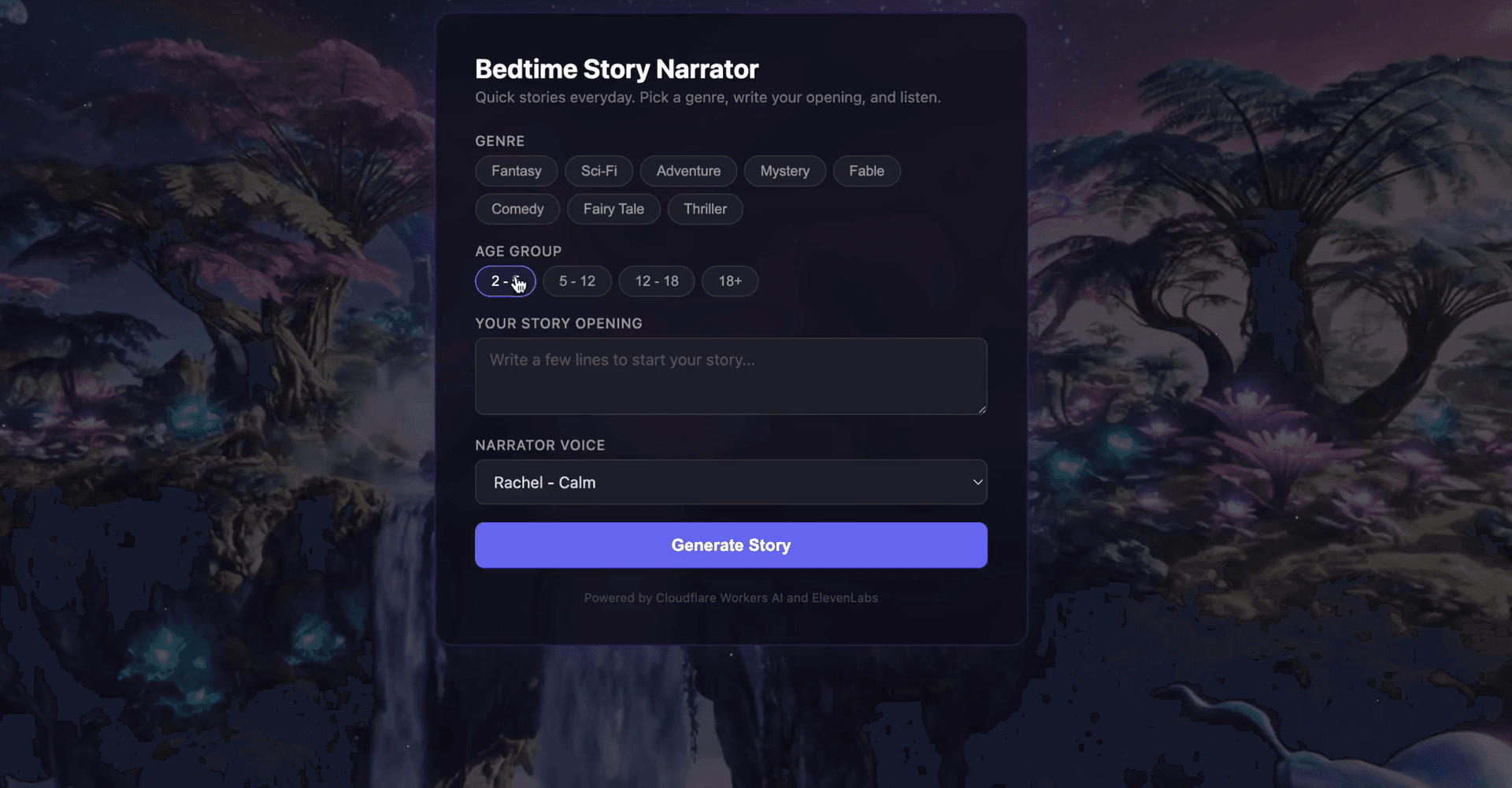

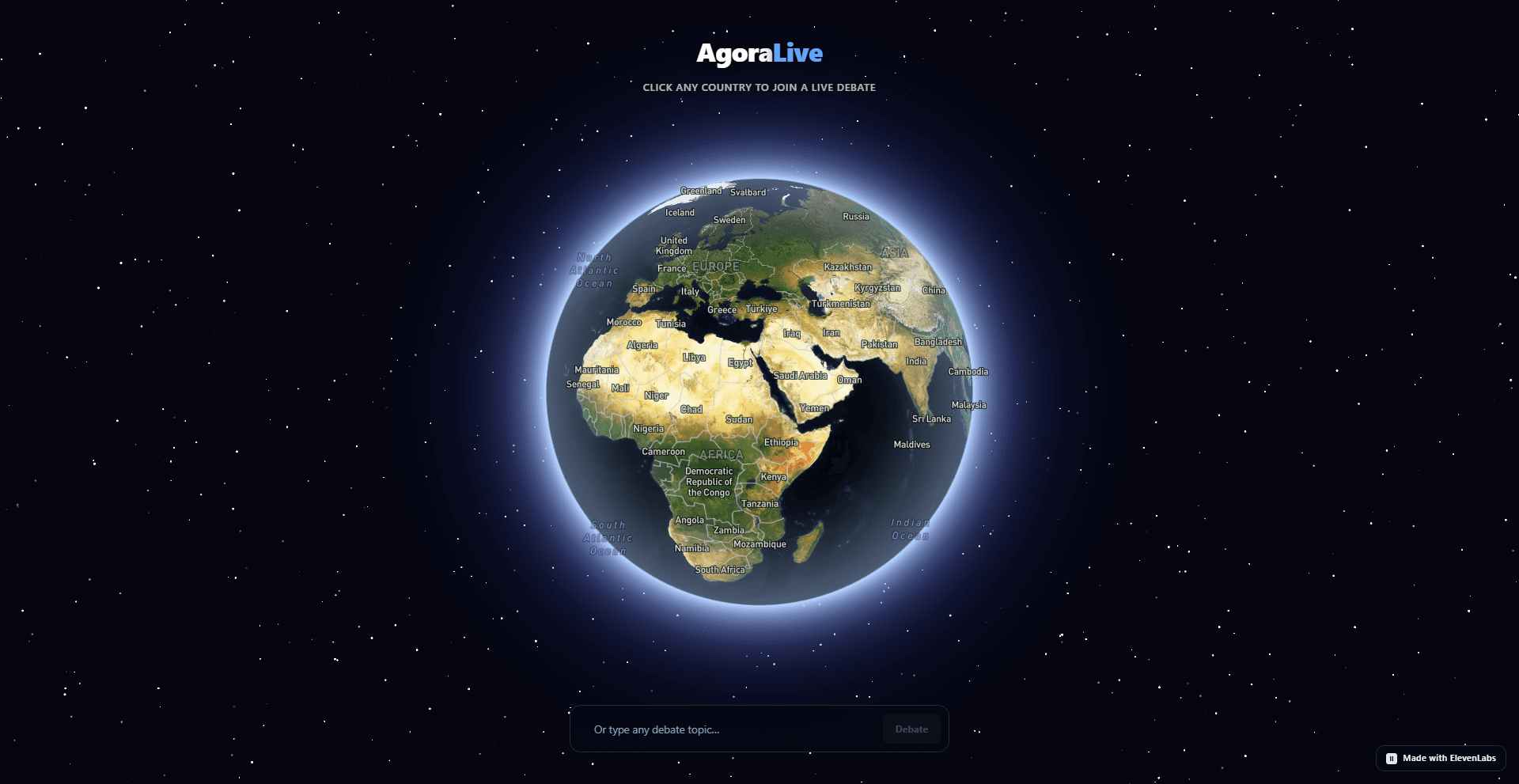

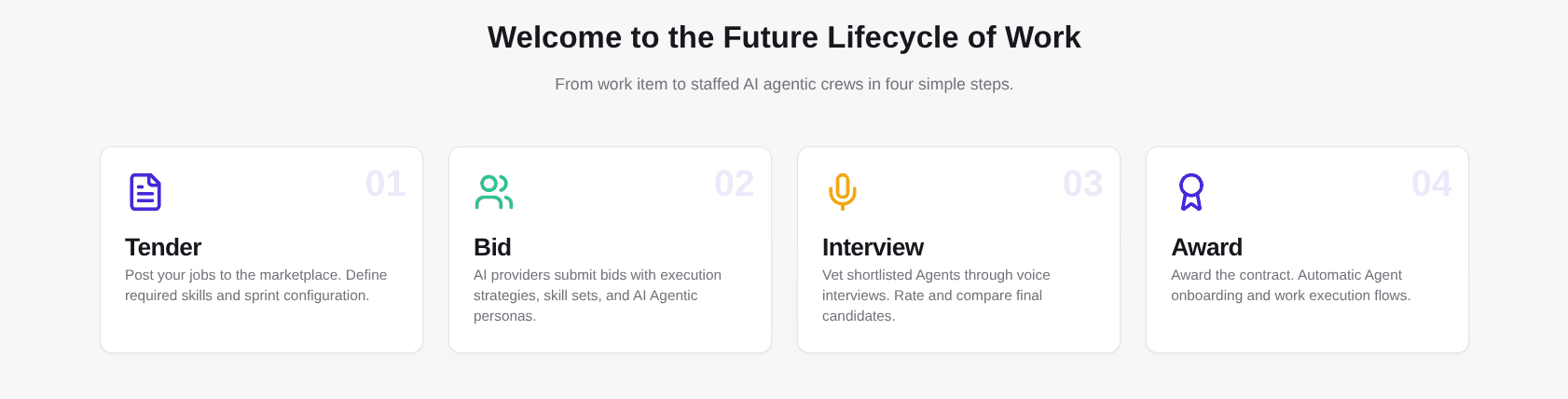

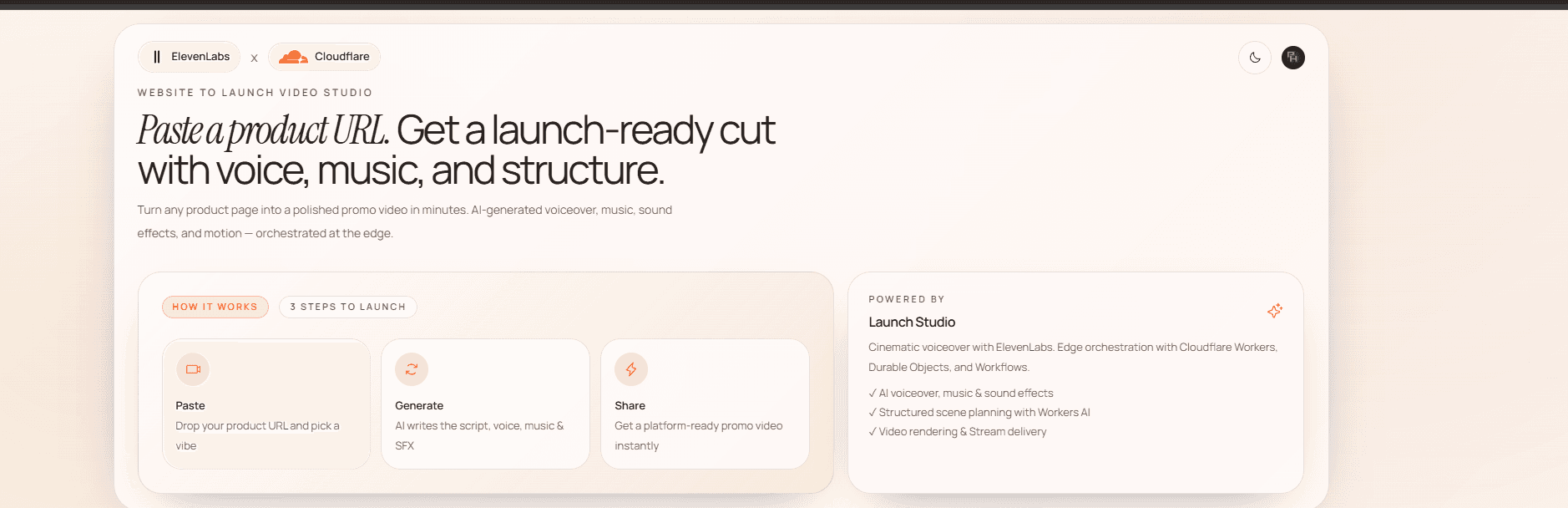

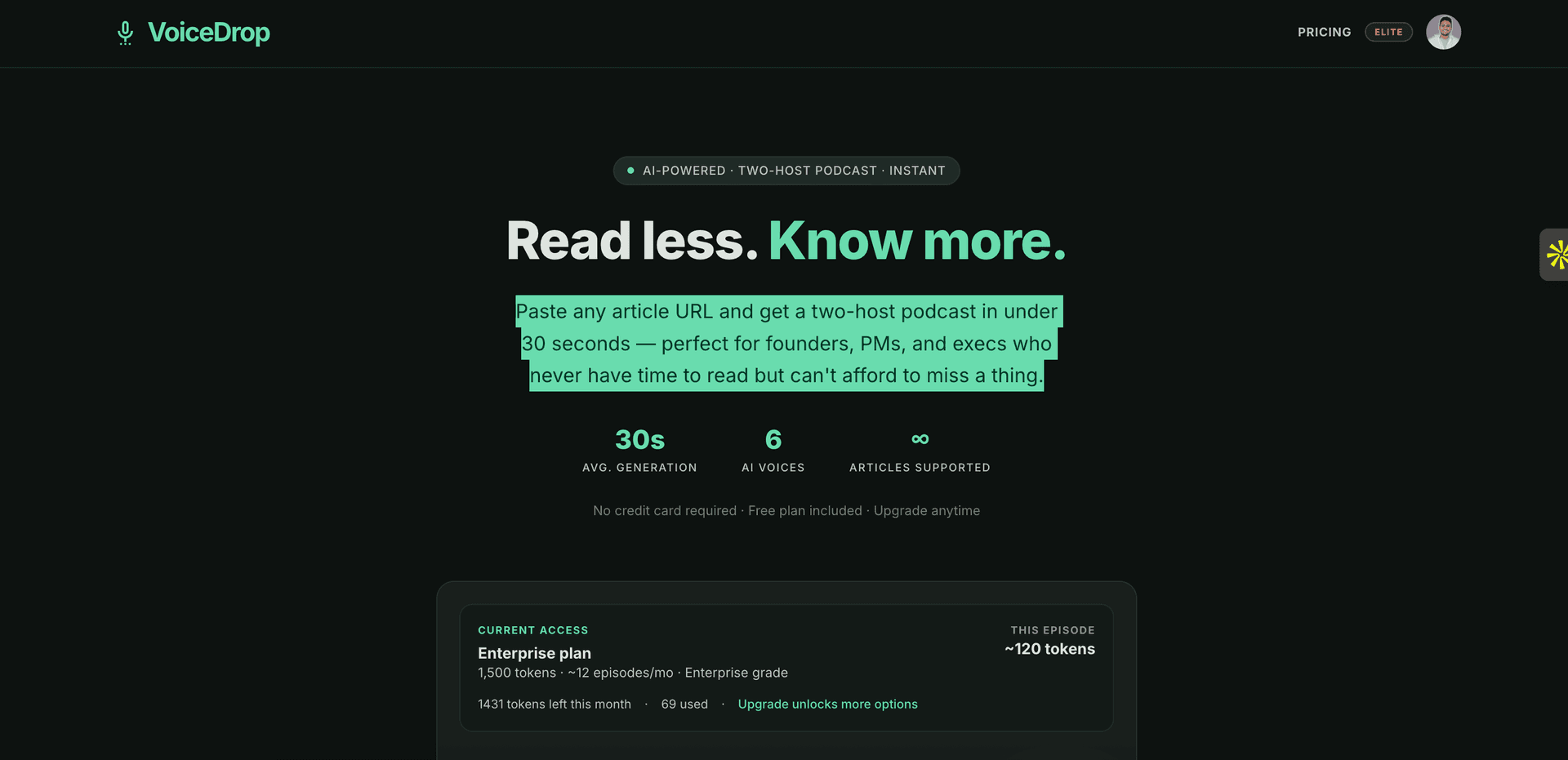

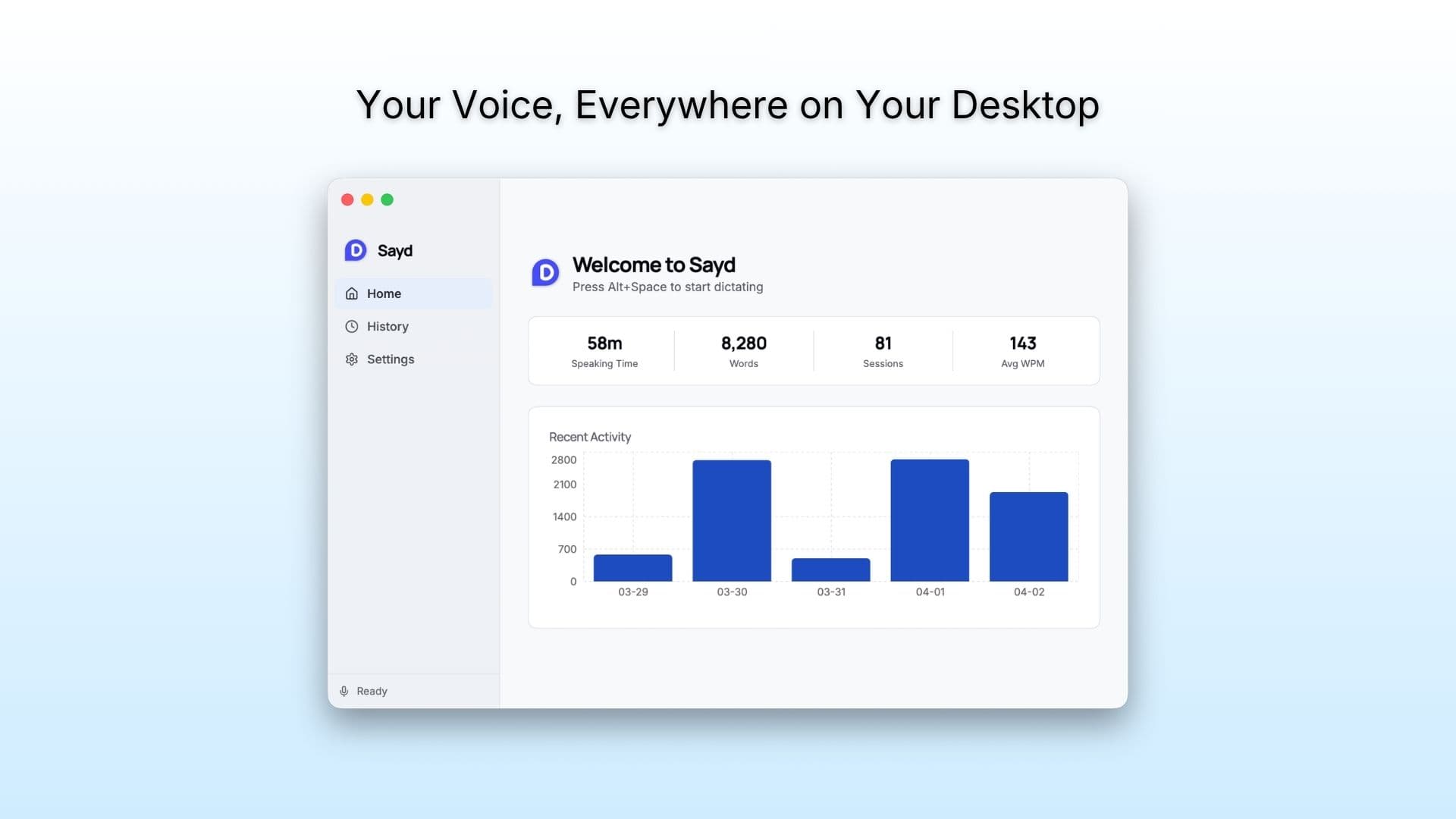

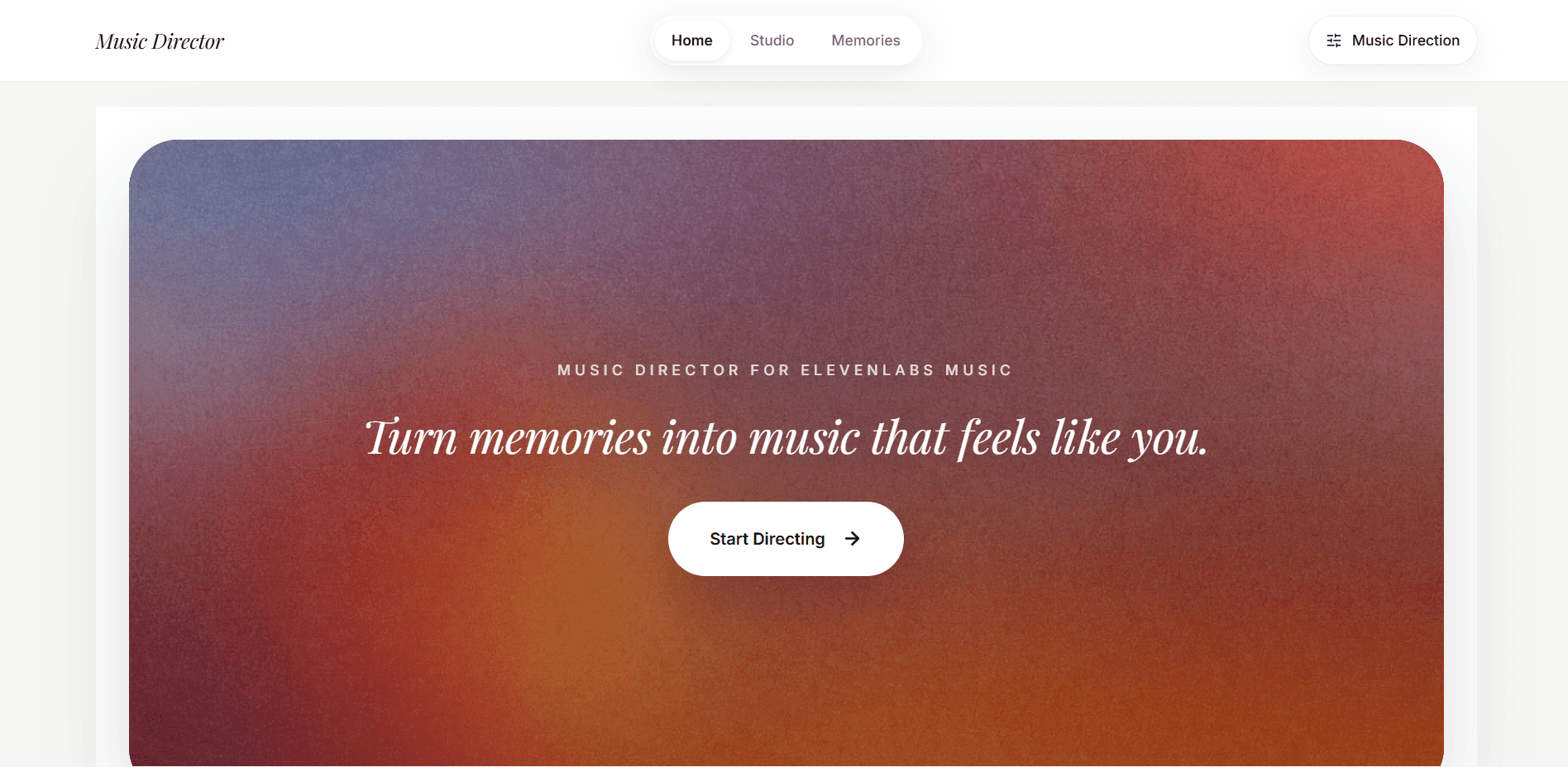

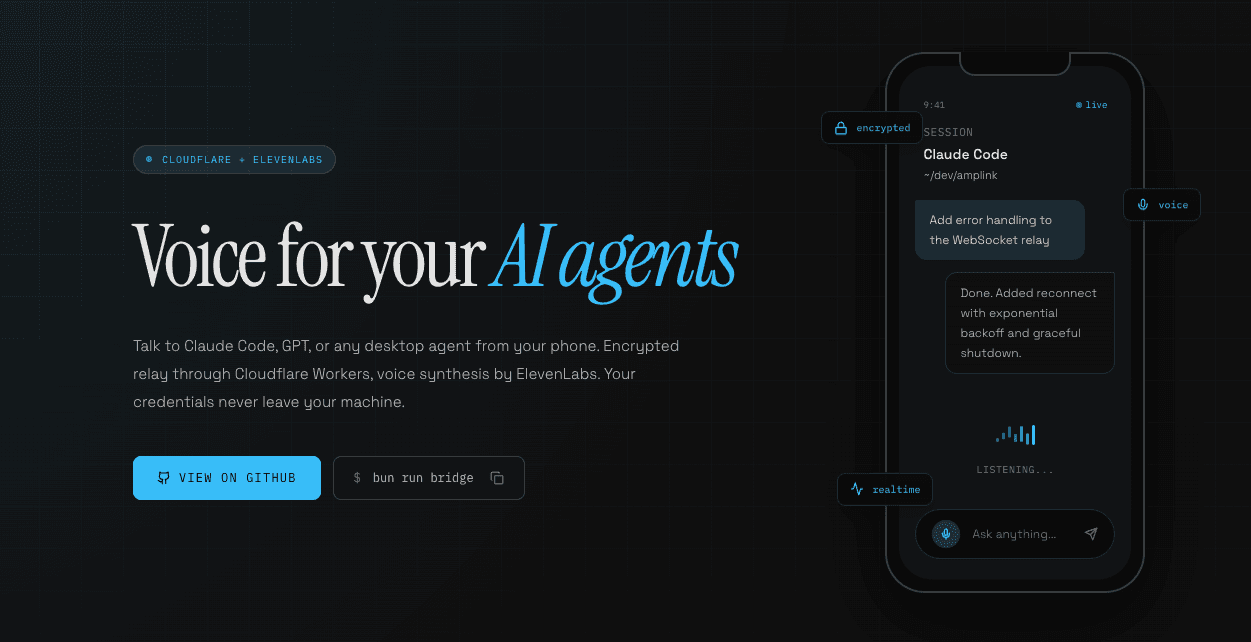

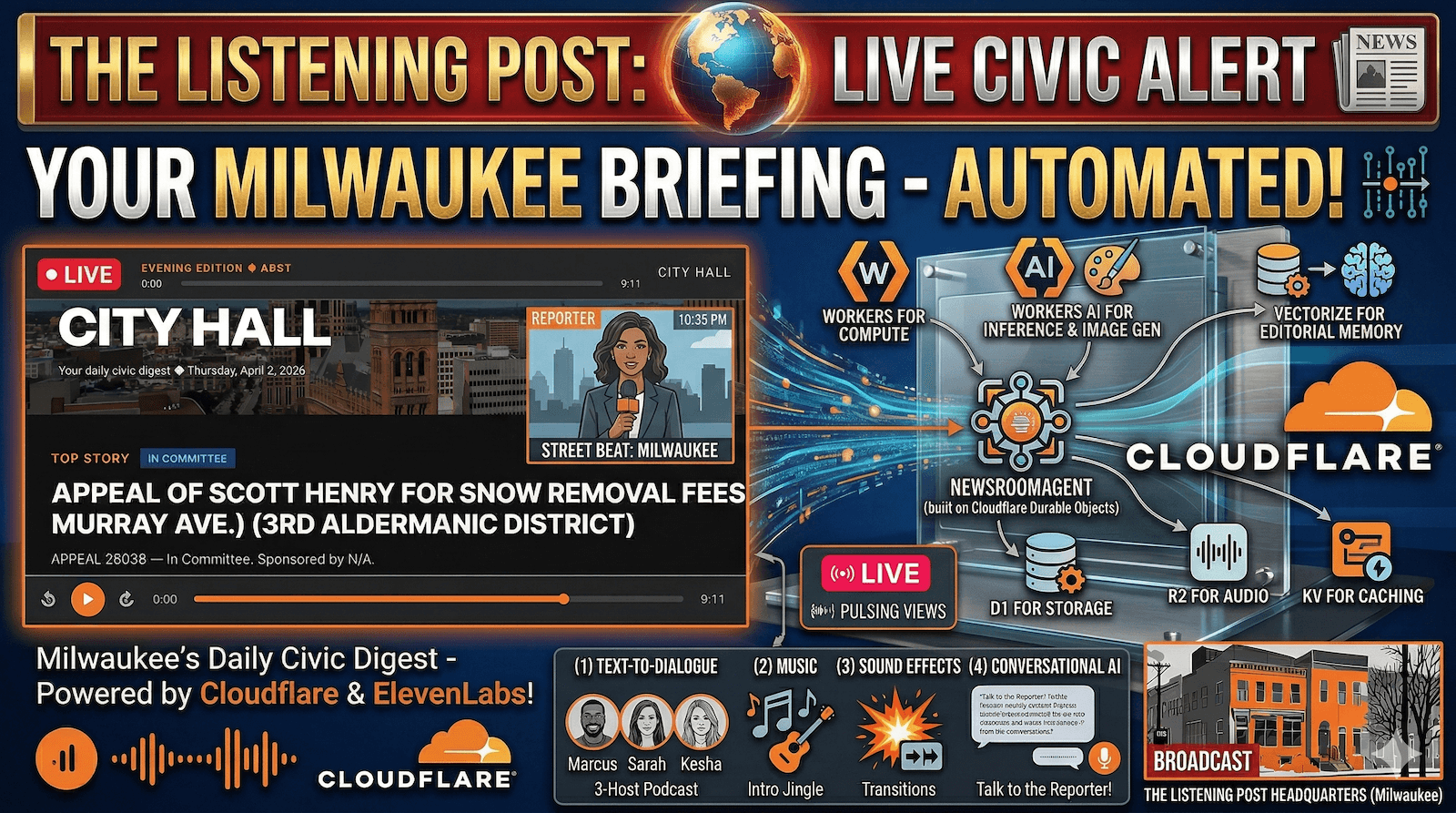

Build an AI-powered app using both Cloudflare's and ElevenLabs' developer platforms.

Prizes

$131,980 total

1st Place: $100,990

- $100k in Cloudflare credits

- 3 months ElevenLabs Scale ($990)

2nd Place: $25,660

- $25k in Cloudflare credits

- 2 months ElevenLabs Scale ($660)

3rd Place: $5,330

- $5k in Cloudflare credits

- 1 month ElevenLabs Scale ($330)
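Each place's value is the Cloudflare credits plus the ElevenLabs Scale months. The short TypeScript check below reproduces that arithmetic. It assumes a flat $330/month Scale rate, which is inferred from the listed figures ($990 for 3 months, $660 for 2, $330 for 1) rather than taken from a separate price list.

```typescript
// Listed prize components; the $330/month ElevenLabs Scale rate is an
// inference from the figures above, not a separately published price.
const SCALE_PER_MONTH = 330;

const prizes = [
  { place: "1st", credits: 100_000, scaleMonths: 3 },
  { place: "2nd", credits: 25_000, scaleMonths: 2 },
  { place: "3rd", credits: 5_000, scaleMonths: 1 },
];

// Value of each place = Cloudflare credits + Scale months at the inferred rate.
const rows = prizes.map((p) => ({
  place: p.place,
  value: p.credits + p.scaleMonths * SCALE_PER_MONTH,
}));

// The three places together make up the advertised total.
const total = rows.reduce((sum, r) => sum + r.value, 0);

for (const r of rows) console.log(`${r.place}: $${r.value.toLocaleString("en-US")}`);
console.log(`Total: $${total.toLocaleString("en-US")}`);
```

The per-place values come out to $100,990, $25,660 and $5,330, which sum to the $131,980 total.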

Build something creative with Cloudflare's developer platform and ElevenLabs, then submit a high-quality viral-style video demonstrating what you've built.

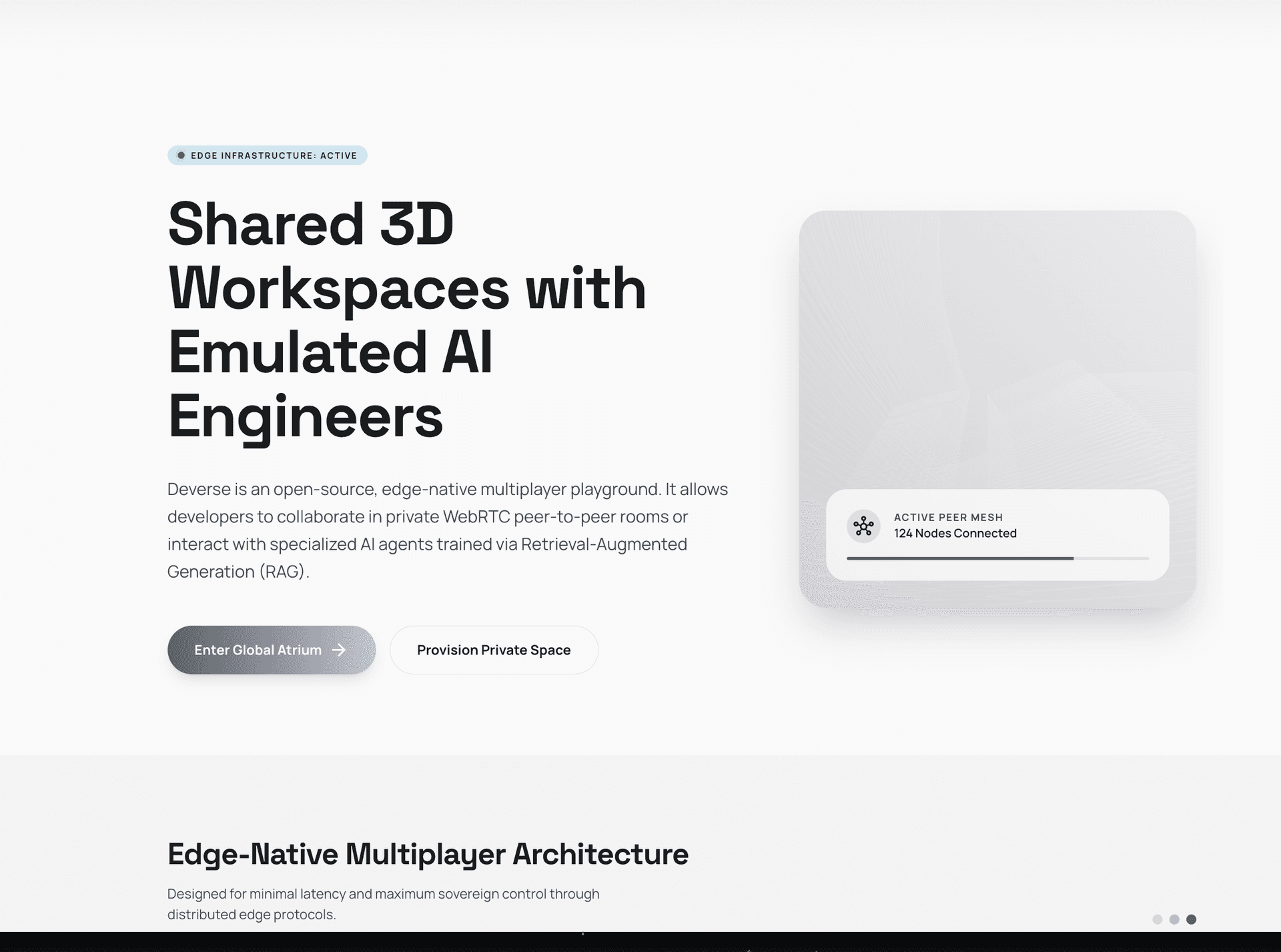

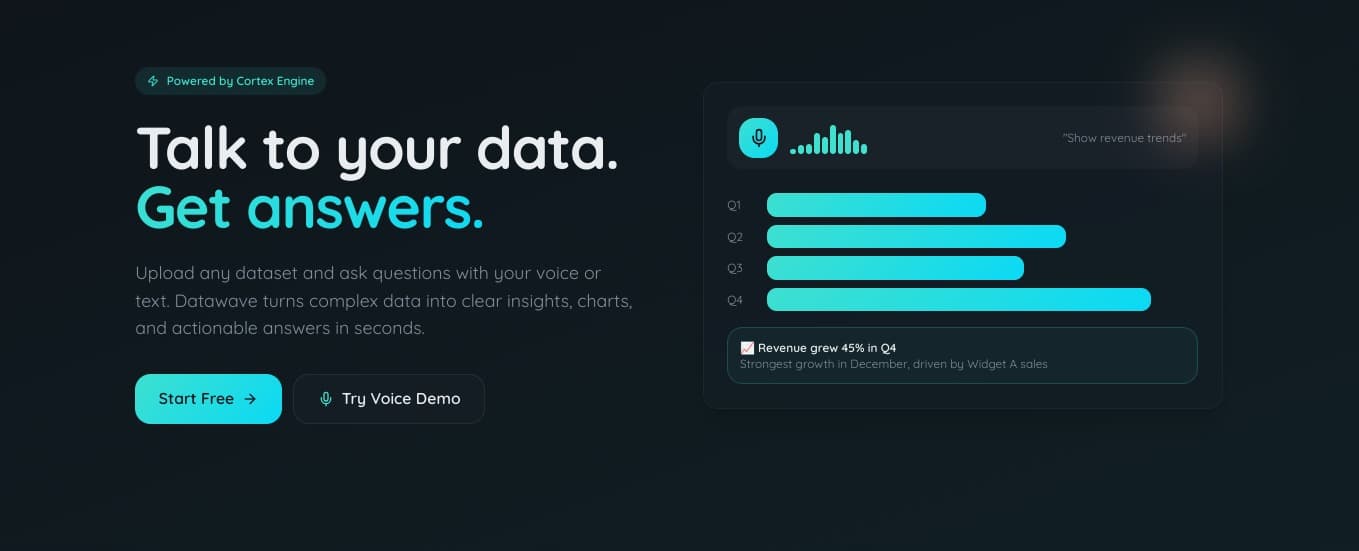

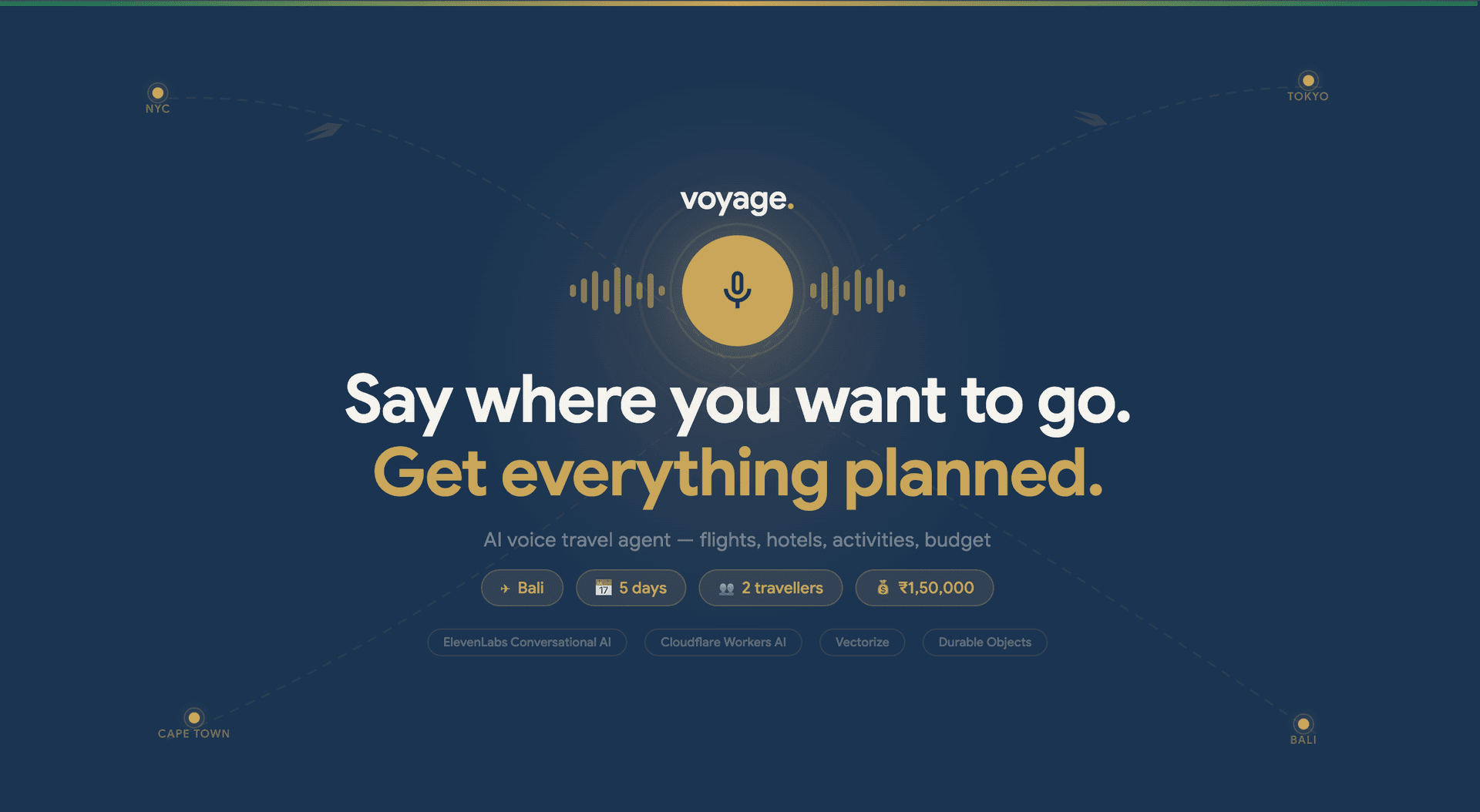

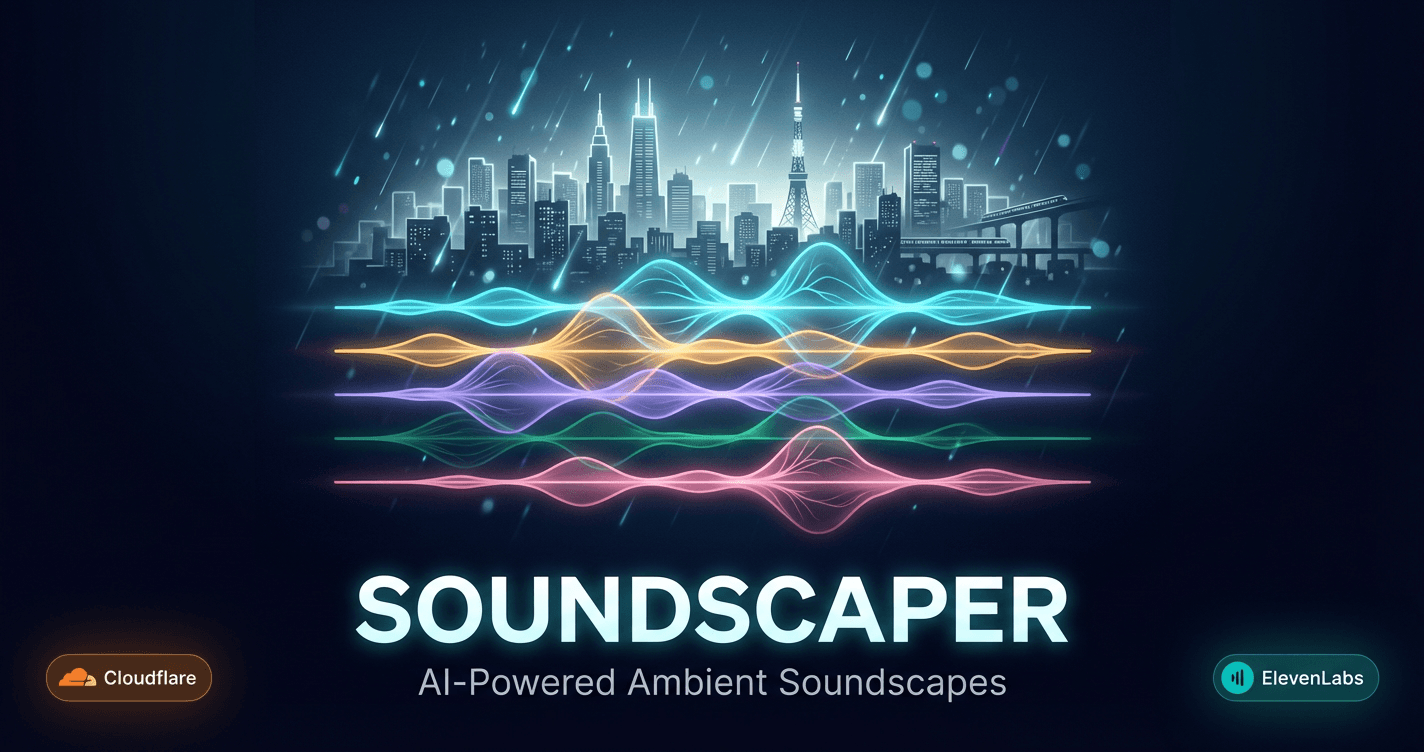

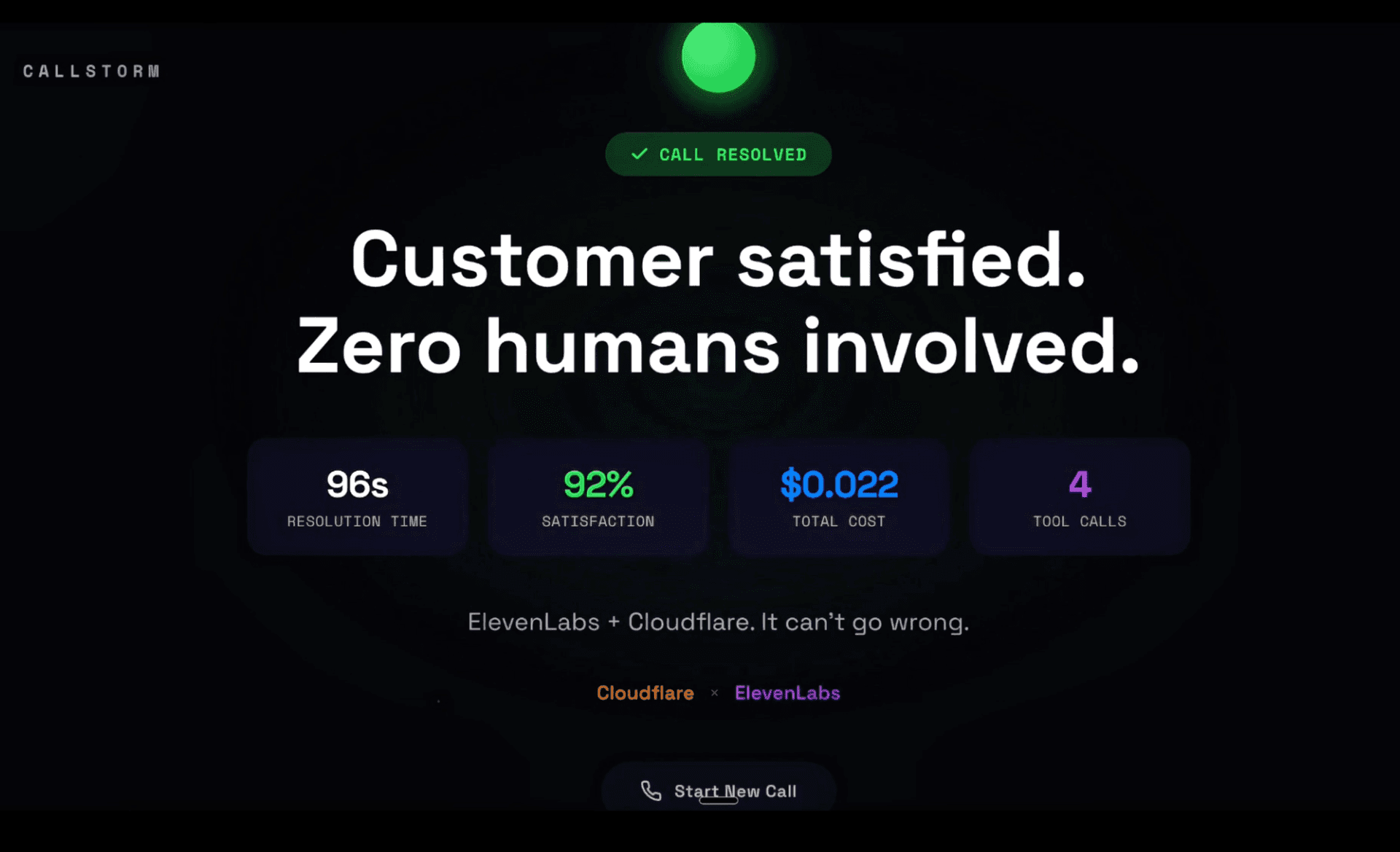

Cloudflare Agents is the platform for building AI agents. Build agents on Cloudflare with durable execution, serverless inference, and pricing that scales. Use Workers for compute, Workers AI for inference, Durable Objects for state, and tools like Browser Rendering and Vectorize.
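Here is a minimal sketch of the Workers and Workers AI part of that stack: a Worker `fetch` handler that forwards a prompt to a text-generation model through the AI binding. It makes three assumptions. The binding is named `AI` in the Wrangler config. The model ID `@cf/meta/llama-3.1-8b-instruct` is just one choice from the Workers AI catalog. `AiBinding` and `Env` are simplified local stand-ins for the types that `wrangler types` would generate.

```typescript
// Simplified local stand-ins for the generated Worker types; a real
// project would use the `Env` produced by `wrangler types`.
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface AiBinding {
  run(model: string, inputs: { messages: ChatMessage[] }): Promise<{ response?: string }>;
}

interface Env {
  AI: AiBinding; // assumed binding name from the Wrangler config
}

// One text-generation model from the Workers AI catalog (an example choice).
const MODEL = "@cf/meta/llama-3.1-8b-instruct";

const worker = {
  async fetch(request: Request, env: Env): Promise<Response> {
    if (request.method !== "POST") {
      return new Response('POST a JSON body like {"prompt": "..."}', { status: 405 });
    }

    const { prompt } = (await request.json()) as { prompt: string };

    // Serverless inference: the binding runs the model on Cloudflare's network.
    const result = await env.AI.run(MODEL, {
      messages: [
        { role: "system", content: "You are a concise, friendly assistant." },
        { role: "user", content: prompt },
      ],
    });

    return new Response(JSON.stringify({ reply: result.response ?? "" }), {
      headers: { "content-type": "application/json" },
    });
  },
};

export default worker;
```

Because `env` is passed in rather than imported, you can exercise the handler locally with a fake `AI` object before deploying with Wrangler.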

ElevenLabs offers state-of-the-art voice AI including text-to-speech, voice cloning, and conversational AI agents. Combine Cloudflare's edge infrastructure with ElevenLabs' voice capabilities to build something unique.
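On the ElevenLabs side, a Worker can call the text-to-speech REST endpoint directly with `fetch`. The sketch below only builds the request. `buildTtsRequest` is a hypothetical helper. The model ID `eleven_multilingual_v2` and the optional `voice_settings` fields are one reasonable configuration, so check the ElevenLabs API reference for current models and parameters.

```typescript
// Subset of the ElevenLabs `voice_settings` object used here.
interface VoiceSettings {
  stability: number; // lower = more expressive variation between takes
  similarity_boost: number; // how closely output should match the source voice
}

// Hypothetical helper: builds (but does not send) a text-to-speech request.
function buildTtsRequest(
  apiKey: string,
  voiceId: string,
  text: string,
  settings?: VoiceSettings,
): Request {
  const url = `https://api.elevenlabs.io/v1/text-to-speech/${encodeURIComponent(voiceId)}`;
  const body = {
    text,
    model_id: "eleven_multilingual_v2", // example model choice
    ...(settings ? { voice_settings: settings } : {}),
  };
  return new Request(url, {
    method: "POST",
    headers: {
      "xi-api-key": apiKey,
      "content-type": "application/json",
      accept: "audio/mpeg",
    },
    body: JSON.stringify(body),
  });
}
```

Inside a Worker, pass the result to `fetch` and stream the response body back to the client as `audio/mpeg`. Keep the API key in a Worker secret rather than in source code. A secret name such as `ELEVENLABS_API_KEY` would be a hypothetical choice.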

What we're looking for

We're most excited to see creative use of Cloudflare Workers, Durable Objects, and a combination of ElevenLabs APIs. Show us what's possible when you push Cloudflare's infrastructure to its limits.

If you're less technical and can't experiment with the Cloudflare APIs as much, we'd still love to see you submit something deployed on Cloudflare. AI-assisted coding tools such as Cursor, Claude Code, or Zed can help you build and deploy a project to Cloudflare Workers/Pages.

Getting started

The Cloudflare Workers free tier is very generous and offers almost all the functionality of the paid tier, so you can get started without any cost.

Resources

- ElevenLabs × Cloudflare starter kit

- Cloudflare Workers docs

- Workers AI docs

- Cloudflare Agents docs

- ElevenLabs docs

Tag us

When posting your submission on social media, tag @CloudflareDev and @elevenlabsio and use the hashtag #ElevenHacks.

Scoring

- Social posts: +50 pts per platform (X, LinkedIn, Instagram, TikTok)

- Placement: 1st place +400 pts, 2nd +200 pts, 3rd +150 pts

- Most Viral: +200 pts for the post with the most engagement

- Most Popular: +200 pts (community vote via emoji reacts)
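The scoring rules above can be sketched as a small TypeScript function. `score` is a hypothetical helper, not the organizers' tally. It makes two assumptions: each platform counts once no matter how many posts go up there, and the Most Viral and Most Popular bonuses stack with placement points.

```typescript
type Platform = "X" | "LinkedIn" | "Instagram" | "TikTok";

interface Result {
  platforms: Platform[]; // platforms where the submission was posted
  placement?: 1 | 2 | 3;
  mostViral?: boolean;
  mostPopular?: boolean;
}

const PLACEMENT_POINTS: Record<1 | 2 | 3, number> = { 1: 400, 2: 200, 3: 150 };

function score(r: Result): number {
  // Assumption: +50 per distinct platform, so duplicates are ignored.
  const social = new Set(r.platforms).size * 50;
  const placement = r.placement ? PLACEMENT_POINTS[r.placement] : 0;
  const viral = r.mostViral ? 200 : 0;
  const popular = r.mostPopular ? 200 : 0;
  return social + placement + viral + popular;
}
```

Under these assumptions the maximum is 1,000 points: 4 × 50 for social posts, plus 400 for first place, plus 200 each for Most Viral and Most Popular.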

Attendee offers

1 month ElevenLabs Creator

Free month of ElevenLabs Creator plan for all attendees

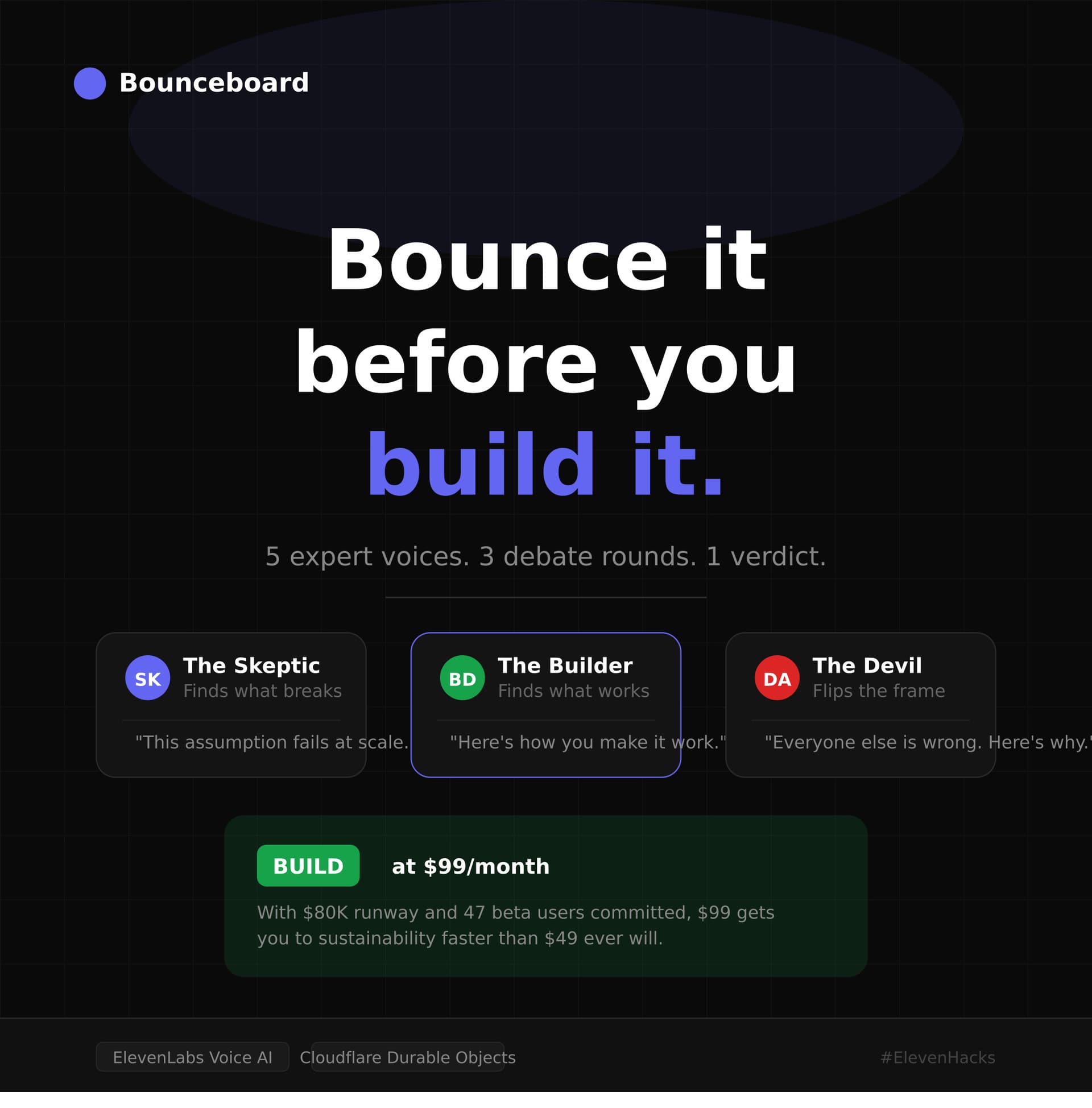

Submissions (87)

Joaquin

2 Apr, 14:11

🏆1st

Case 47: The Last Night is a noir detective game where players interrogate AI-powered witnesses to solve a murder mystery. Each suspect lives in its own Cloudflare Durable Object, which gives it persistent memory and a consistent personality across sessions. As the interrogation evolves, ElevenLabs voice parameters shift dynamically based on the character's detected emotional state: a cornered suspect sounds genuinely different from a calm one.
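The pattern described here, one Durable Object per suspect plus voice settings keyed to emotion, can be sketched roughly as follows. This is not the submission's code. `SuspectObject`, the `history` storage key, the `Emotion` labels and the numeric voice settings are all hypothetical. `StateLike` is a minimal local stand-in for the runtime's `DurableObjectState`, and the class uses the classic fetch-style Durable Object shape. The real API surface it relies on is `state.storage.get`/`put` and the ElevenLabs `voice_settings` fields `stability`, `similarity_boost` and `style`. The emotion label is assumed to come from an upstream classifier.

```typescript
// Minimal local stand-ins for the runtime's DurableObjectState/storage types.
interface StorageLike {
  get<T>(key: string): Promise<T | undefined>;
  put<T>(key: string, value: T): Promise<void>;
}

interface StateLike {
  storage: StorageLike;
}

// Hypothetical emotion labels, assumed to come from an upstream classifier.
type Emotion = "calm" | "nervous" | "cornered";

// Subset of ElevenLabs `voice_settings`; the numbers are illustrative only.
interface VoiceSettings {
  stability: number;
  similarity_boost: number;
  style: number;
}

// Lower stability and higher style push toward a less steady, more
// exaggerated delivery as the suspect comes under pressure.
const VOICE_BY_EMOTION: Record<Emotion, VoiceSettings> = {
  calm: { stability: 0.8, similarity_boost: 0.75, style: 0.1 },
  nervous: { stability: 0.5, similarity_boost: 0.75, style: 0.4 },
  cornered: { stability: 0.25, similarity_boost: 0.75, style: 0.8 },
};

// One instance per suspect: Durable Object storage keeps the
// interrogation history across requests and sessions.
export class SuspectObject {
  private readonly state: StateLike;

  constructor(state: StateLike) {
    this.state = state;
  }

  async fetch(request: Request): Promise<Response> {
    const { question, emotion } = (await request.json()) as {
      question: string;
      emotion: Emotion;
    };

    // Append this question to the suspect's stored memory.
    const history = (await this.state.storage.get<string[]>("history")) ?? [];
    history.push(question);
    await this.state.storage.put("history", history);

    // Fall back to the calm voice if the label is unrecognized.
    const voice = VOICE_BY_EMOTION[emotion] ?? VOICE_BY_EMOTION.calm;

    return new Response(JSON.stringify({ turns: history.length, voice_settings: voice }), {
      headers: { "content-type": "application/json" },
    });
  }
}
```

In a deployment, the Worker would route each suspect to its own instance, for example via `idFromName("suspect-3")` on a Durable Object namespace binding (the binding and suspect names being hypothetical). It would then pass the returned `voice_settings` into the ElevenLabs text-to-speech request.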